Quick reference: All 18 examples at a glance

| Company | MVP Type | Year | Budget | Key Result |
|---|---|---|---|---|
| Amazon | Concierge | 1994 | ~$10,000 | $511K revenue in year one |
| Etsy | Single feature | 2005 | ~$30,000 | 10,000 sellers in 6 months |
| Groupon | Piecemeal | 2008 | <$5,000 | $33M revenue in 18 months |
| Zappos | Wizard of Oz | 1999 | Minimal | Acquired by Amazon for $1.2B |
| Facebook | Single feature | 2004 | $0 | 1M users in 6 months |
| Twitter | Internal tool | 2006 | Minimal | 450M+ monthly active users |
| Instagram | Single feature | 2010 | Minimal | 1M users in 2 months |
| Foursquare | Single feature | 2009 | ~$1.35M | 1M users in first year |
| ProductHunt | Piecemeal | 2013 | $0 | Platform built after 170 members in 2 weeks |
| Dropbox | Video | 2007 | Minimal | 75,000 signups overnight |
| Buffer | Landing page | 2010 | ~$500 | First paying customer in 3 days |
| Spotify | Single feature | 2006 | ~$1M | 80%+ beta users became daily users |
| AngelList | Email | 2010 | Minimal | Became leading startup funding platform |
| Airbnb | Concierge | 2008 | Minimal | Confirmed strangers would pay to share homes |
| Uber | Single feature | 2009 | Small seed | Confirmed premium ride demand in one city |
| Slack | Internal tool | 2013 | Existing budget | $27.7B acquisition by Salesforce |
| Kickstarter | Crowdfunding | 2009 | ~$50,000 | Platform now hosts $7B+ in pledges |
| Netflix | Single feature | 1997 | ~$2.5M | Proved DVD-by-mail demand before streaming |

Successful MVP examples in software development

E-commerce & marketplace MVPs

1. Amazon: Manual fulfillment to validate e-commerce demand

MVP type: Concierge + Landing page

Year: 1994

Initial investment: ~$10,000

The assumption being tested: Would people buy products online without seeing or touching them first?

In 1994, Jeff Bezos noticed internet usage growing rapidly and saw an opportunity in online retail. The obvious obstacle was infrastructure: warehouses, inventory, logistics, and fulfillment systems would require millions in capital before a single order was placed. He needed to know whether the demand was real before spending any of it.

His approach was to skip the infrastructure entirely. Bezos built a basic website listing books, took orders, then manually purchased those books from distributors, packed them at home, and drove to the post office himself. The customer saw a functioning bookstore. Behind it was one person running a manual operation.

The results came back fast. Within the first week, orders were coming in from across the US and dozens of countries. Month one brought $20,000 in book sales. Year one hit $511,000. The hypothesis was confirmed: people would buy products online.

What Amazon did next is the part most retellings skip. They did not immediately build warehouses. They iterated on the manual process, gradually automating only the parts that had been validated, expanding to new product categories only after proving each one. The infrastructure came after the demand was confirmed, not before.

What to apply: If your business involves inventory, logistics, or fulfillment, consider whether you can manually complete a handful of real orders before building any of that infrastructure. The cost of ten manual orders is negligible. The cost of building a warehouse for a product nobody wants is not.

2. Etsy: Competing by doing one thing better than the market leader

MVP type: Single feature

Year: 2005

Initial investment: ~$30,000

The assumption being tested: Would crafters leave eBay for a platform built specifically for handmade goods, even with fewer features?

eBay existed and had significant network effects. Robert Kalin and his co-founders were not trying to out-feature it. They identified one specific frustration: eBay’s marketplace was slow, generic, and hostile to the aesthetic that craft sellers and buyers cared about.

The Etsy MVP launched with the minimum required to run a marketplace: product listings, search, checkout, and seller profiles. No forums, no community features, no editorial content. Just a clean, fast-loading place for handmade items to be bought and sold.

The first month brought 300 sellers. Six months in, that was 10,000. What they learned from early users shaped everything that came after: the community and shared values around craft mattered as much as the transaction itself. Forums, maker stories, and seller resources came later, built on feedback from the people who validated the core.

Today Etsy has 7.5 million active sellers and over $13 billion in annual sales.

What to apply: If you are entering a market with an established player, you do not need to match their feature set at launch. Find the one dimension where the incumbent is genuinely failing a specific user segment and build for that gap only.

3. Groupon: A piecemeal MVP with no custom code at all

MVP type: Piecemeal

Year: 2008

Initial investment: <$5,000

The assumption being tested: Would people pre-purchase discounted deals from local businesses they had not visited?

Andrew Mason had a hypothesis about local commerce but no engineering budget to test it properly. His solution was to use tools that already existed. He set up a WordPress blog to post daily deals, collected payments through PayPal, emailed PDF vouchers manually to buyers, and called merchants personally to arrange each deal.

The first deal was 50% off pizza at a restaurant in the same building as his office. Twenty people bought it. That was enough signal to keep going.

Eighteen months later, Groupon had $33 million in revenue. Two years in, the company raised over a billion dollars in funding. None of the infrastructure that would eventually power that scale existed during the first months of operation. It was all built after the model was confirmed.

What to apply: Before building any custom software, ask whether existing tools can simulate the experience well enough to validate demand. WordPress, Airtable, Typeform, Zapier, and Stripe can assemble a surprisingly functional product without writing a line of code. The ceiling of this approach is real, but it rarely matters at the validation stage.

4. Zappos: Wizard of Oz fulfillment to test a counterintuitive hypothesis

MVP type: Wizard of Oz

Year: 1999

Initial investment: Minimal

The assumption being tested: Would people buy shoes online without trying them on first?

The conventional wisdom in 1999 was firm: shoes require physical fitting. Nick Swinmurn did not argue against this logic. He built a test to find out whether it was actually true.

He photographed shoes at local retail stores, listed them on a website called Shoesite.com, and when someone placed an order, he went to the store, bought the shoes at retail price, and shipped them to the customer. He was often selling at or below cost. That was intentional. The goal was not to make money. It was to find out whether people would buy.

They did. Complaints from early customers were about selection, not about the concept of buying shoes without trying them on. The conventional wisdom was wrong. The opportunity was real. Swinmurn raised capital, built inventory, renamed the company Zappos, and invested in the logistics infrastructure that turned it into a customer service legend. Amazon acquired it in 2009 for $1.2 billion.

What to apply: The Wizard of Oz approach works for any product where the front-end experience can be simulated while the back-end remains manual. The user gets the outcome they came for. You get real behavioral data without building automation you may not need.

Social media & community MVPs

5. Facebook: Artificial constraint as a growth strategy

MVP type: Single feature + First man

Year: 2004

Initial investment: $0

The assumption being tested: Would students use an online directory with social features if it was built specifically for their campus?

Friendster existed and was struggling with scale and performance. Mark Zuckerberg did not try to build a better Friendster. He built something narrower: a directory for Harvard students, with two features. Profile pages and wall posts. Nothing else.

The Harvard-only constraint was not a resource limitation. It was a deliberate choice. Restricting access to one institution created a sense of exclusivity that made the product more desirable when it did expand. Within 24 hours of launch, 1,200 students had signed up, roughly half of Harvard’s undergraduates at the time. Within six months, the platform had a million users across hundreds of college networks.

Each feature that became central to Facebook (the news feed, photos, events, messaging) came after the core was validated. When features were added too quickly, engagement dropped. The lesson was built into how they iterated.

What to apply: Geographic or demographic constraints in an MVP are not weaknesses. Launching to one city, one school, one industry, or one user segment gives you cleaner data and often creates more genuine word-of-mouth than a broad launch to anyone who will listen.

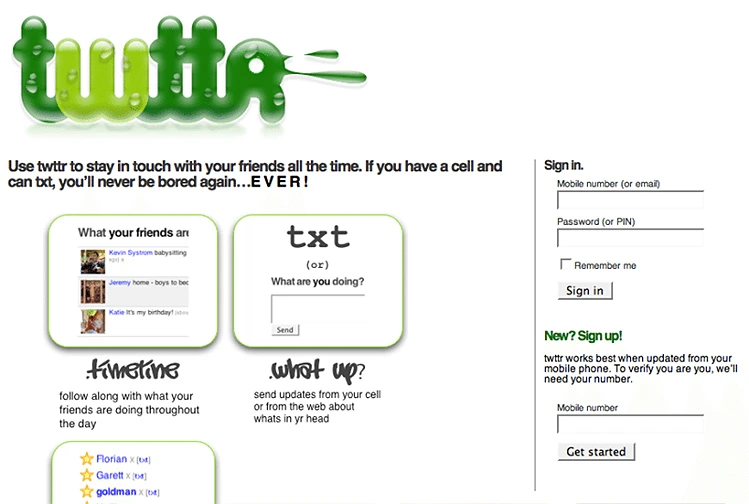

6. Twitter: An internal tool that solved a real problem

MVP type: Internal tool

Year: 2006

Initial investment: Minimal

The assumption being tested: Would people find value in short, public status updates sent to a group?

Twitter was not built as a product from the start. Odeo, the company it came from, was a podcasting platform that lost its reason to exist when Apple launched iTunes podcasting in 2005. During a hackathon to explore new directions, Jack Dorsey proposed a simple concept: an SMS-based service for sharing short status updates with groups.

The team built it as an internal tool for Odeo employees. What happened next was not part of the plan. Employees started spending significant amounts of their own money on SMS charges to use it. During a San Francisco earthquake, the team used Twitter to communicate in real time faster than any news outlet could. The signal was clear.

Odeo shut down. The founders focused entirely on Twitter. The 140-character limit, a constraint imposed by SMS length limits rather than product design, became the defining characteristic of the platform.

What to apply: If you are trying to validate whether a product solves a real problem, build it for people who have that problem right now, including your own team. Internal tools have a low bar for launch and a high bar for honest feedback.

7. Instagram: One feature, executed without compromise

MVP type: Single feature

Year: 2010

Initial investment: Minimal

The assumption being tested: Would people share more photos if a simple tool made them look significantly better?

Kevin Systrom and Mike Krieger had been working on a location-sharing app called Burbn. It had too many features and unclear product direction. Rather than iterating on a bloated product, they stripped it down to the one thing users actually engaged with: photo sharing with filters.

The launch version had no direct messages, no stories, no explore page, no shopping. Just the ability to take or upload a photo, apply a filter, and share it. iPhone only.

The app attracted 25,000 users on its first day on the App Store, reached one million users within two months, and was acquired by Facebook for one billion dollars eighteen months after launch. Every major feature that followed came from observing how users actually used the stripped-down version and responding to what they asked for.

What to apply: If your product has multiple features and unclear traction, consider what users actually do versus what you built for them to do. The gap between those two things often points to where your real product is.

8. Foursquare: Validating behavior before adding the mechanism that made it addictive

MVP type: Single feature

Year: 2009

Initial investment: ~$1.35M (seed round)

The assumption being tested: Would people voluntarily share their physical location with friends?

Location sharing was a new behavior in 2009 and not an obvious one. Dennis Crowley and Naveen Selvadurai did not know whether people would actually do it. The MVP was simple: a check-in button, a location-based friend list, and a basic venue database. No recommendations, no reviews, no badges.

They launched at SXSW in Austin, where the concentrated density of early adopters gave them fast signal. People checked in. The behavior existed. Once that was confirmed, Foursquare added gamification: badges for check-in patterns, mayorships for frequent visitors. Usage accelerated significantly. The gamification layer would have been noise without first confirming that the underlying behavior was real.

What to apply: Validate the core behavior before layering on engagement mechanics. Gamification, notifications, streaks, and rewards amplify behavior that already exists. They do not create it. If users are not doing the core action without incentives, adding incentives will not fix the underlying problem.

9. ProductHunt: A community validated in 20 minutes with no code

MVP type: Piecemeal

Year: 2013

Initial investment: $0

The assumption being tested: Would people actively engage with a daily curated list of new products?

Ryan Hoover had an idea for a product discovery community but no certainty that the engagement would be there. Before writing any code, he used a link-sharing tool called Linkydink to set up an email-based version of the concept, invited startup friends from his personal network, and promoted the idea on Twitter.

Setup took 20 minutes. Within two weeks, 170 members had joined and were actively sharing and discussing products every day. That was enough signal. Hoover built a proper web platform on the back of that validation. ProductHunt became the primary launch destination for new software products and was eventually acquired by AngelList.

What to apply: A community is one of the hardest things to build and one of the easiest things to test. Before building infrastructure for a community product, find out whether people will show up and participate using the simplest possible setup.

SaaS & productivity MVPs

10. Dropbox: A video that sold a product that did not exist yet

MVP type: Video

Year: 2007

Initial investment: Minimal

The assumption being tested: Was there large unmet demand for seamless file syncing across devices?

Drew Houston was building a technically complex product. Cloud storage infrastructure, desktop clients, and sync protocols are not things you can fake or manually simulate. A traditional MVP approach did not straightforwardly apply.

His solution was a screencast. He recorded a video demonstrating how Dropbox would work when it was built, showing files dragging into a folder and appearing instantly on another device. He posted it to Hacker News with the title “My YC app: Dropbox – Throw away your USB drive,” including small easter eggs in the video aimed at the Hacker News and Digg communities to encourage sharing.

The beta waitlist went from 5,000 to 75,000 in a single day. Houston now knew two things: the demand was real, and the demo experience was compelling enough to convert at high rates. He built the actual product with that knowledge confirmed.

What to apply: For technically complex products where building even a functional prototype is expensive, a well-produced demonstration video can serve as your market test. The key is that it must show a real experience, not describe it in slides.

11. Buffer: Testing price sensitivity before writing a line of code

MVP type: Landing page

Year: 2010

Initial investment: ~$500

The assumption being tested: Would people pay for a social media scheduling tool, and if so, how much?

Joel Gascoigne did not just want to know whether people were interested in Buffer. He wanted to know whether they would pay, and at what price. He built two landing pages to answer both questions sequentially.

The first page described the product concept and had a sign-up button. He drove traffic from Twitter and startup forums to measure click-through rate on the signup. It was above 15%. Demand signal confirmed.

The second page, reached after clicking sign up, showed three pricing tiers: free, five dollars per month, and twenty dollars per month. Users were asked which tier interested them. The data showed clear willingness to pay for the paid tiers. Business model signal confirmed.

Gascoigne then built the actual product. It launched six weeks later. Buffer had its first paying customer within three days of launch. The landing page experiment had de-risked both demand and pricing before a single feature existed.

What to apply: Separate demand validation from pricing validation. Testing them as two sequential questions, rather than assuming both are true at once, gives you cleaner data and often reveals that people are willing to pay more than you expected. For a full breakdown of what an MVP typically costs at each stage, see our article on custom software development costs.
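The two-step test reduces to two ratios: the share of landing-page visitors who click sign up, and the share of pricing-page respondents who pick a paid tier. The Python sketch below computes both. The function names are hypothetical and the counts are made-up placeholders, not Buffer's actual data.

```python
from collections import Counter


def signup_rate(visitors: int, signup_clicks: int) -> float:
    """Share of landing-page visitors who clicked the sign-up button."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return signup_clicks / visitors


def paid_interest_share(tier_choices: list[str], free_tier: str = "free") -> float:
    """Share of pricing-page respondents who picked any paid tier."""
    if not tier_choices:
        raise ValueError("no tier choices recorded")
    counts = Counter(tier_choices)
    paid = sum(n for tier, n in counts.items() if tier != free_tier)
    return paid / len(tier_choices)


# Hypothetical numbers for illustration only (not Buffer's real figures).
visitors = 400
signup_clicks = 68
tier_choices = ["free"] * 40 + ["$5/month"] * 20 + ["$20/month"] * 8

demand = signup_rate(visitors, signup_clicks)
pricing = paid_interest_share(tier_choices)
print(f"Sign-up click-through: {demand:.1%}")  # 17.0%
print(f"Chose a paid tier: {pricing:.1%}")     # 41.2%
```

Keeping the two ratios separate mirrors the sequential design: a strong sign-up rate with a weak paid-tier share would point to interest without willingness to pay.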

12. Spotify: Proving the technology before negotiating with anyone

MVP type: Single feature

Year: 2006

Initial investment: ~$1M

The assumption being tested: Could streaming technology deliver music fast enough to feel better than piracy, and would people use it if it could?

Daniel Ek’s premise was that music piracy was not driven by a desire to steal but by the fact that legal alternatives were slower and more cumbersome than illegal ones. If you could make legal music genuinely faster and easier than piracy, people would use it.

The Spotify MVP was a desktop app for Windows and Mac, available only in Sweden, with one job: stream music instantly with no buffering. No mobile app, no social features, no playlists, no discovery tools. Just search and instant playback.

More than 80% of beta users became daily active users. The technology worked. The premise held. Ek then spent two years in licensing negotiations with major labels before expanding beyond Sweden and building the feature set Spotify is now known for. The technical proof came first. Everything else followed.

What to apply: If your product’s core value proposition depends on a technical capability that has not been proven at scale, proving that the technology works is your first MVP. No amount of product design solves a problem that the underlying technology cannot actually handle.

13. AngelList: Email as an MVP for a two-sided marketplace

MVP type: Email

Year: 2010

Initial investment: Minimal

The assumption being tested: Was there appetite from both founders and investors for a structured matchmaking service in startup fundraising?

Naval Ravikant and Babak Nivi saw that the fundraising process was opaque and inefficient on both sides. Founders did not know which investors were actually relevant to their stage and thesis. Investors missed deals because they did not have visibility into what was being built.

Their MVP was a series of introduction emails. They used their personal networks to manually connect specific founders with specific investors they believed were a match, tracked which introductions led to conversations and eventually to term sheets, and used that data to understand what good matching actually looked like.

No platform, no algorithm, no automation. Just high-touch manual matching that validated both sides of the marketplace wanted the service. AngelList was built on that validated understanding and became the leading platform for startup fundraising and hiring.

What to apply: For two-sided marketplaces, the hardest problem is the chicken-and-egg dynamic. Manual matchmaking, even at very small scale, lets you validate both sides independently before building the platform that automates the connection.

On-demand and platform MVPs

14. Airbnb: Testing a behavior that seemed implausible

MVP type: Concierge

Year: 2008

Initial investment: Minimal

The assumption being tested: Would strangers pay to sleep in another stranger’s home?

Brian Chesky and Joe Gebbia were behind on rent in San Francisco during a design conference that had filled every hotel in the city. They bought three air mattresses, built a simple website called airbedandbreakfast.com, listed their apartment, and offered breakfast to guests.

Three people booked. The founders learned something immediate and practical from those first guests: what they valued, what made them uncomfortable, and what would make them recommend it to others. The experience of hosting real paying guests in their own home before building any product was what gave the founders the understanding they needed to build the right one.

The MVP validated the core assumption. Strangers would pay. Hosts would open their homes. The risk was manageable on both sides. Everything that Airbnb built after that (verification, reviews, host protection, payments, messaging) was designed around what those first real interactions revealed.

What to apply: Your earliest users are not just customers. They are your most important source of product intelligence. Being physically present for the first few transactions, whether as the host, the fulfillment person, or the support agent, gives you information that no analytics dashboard can replicate.

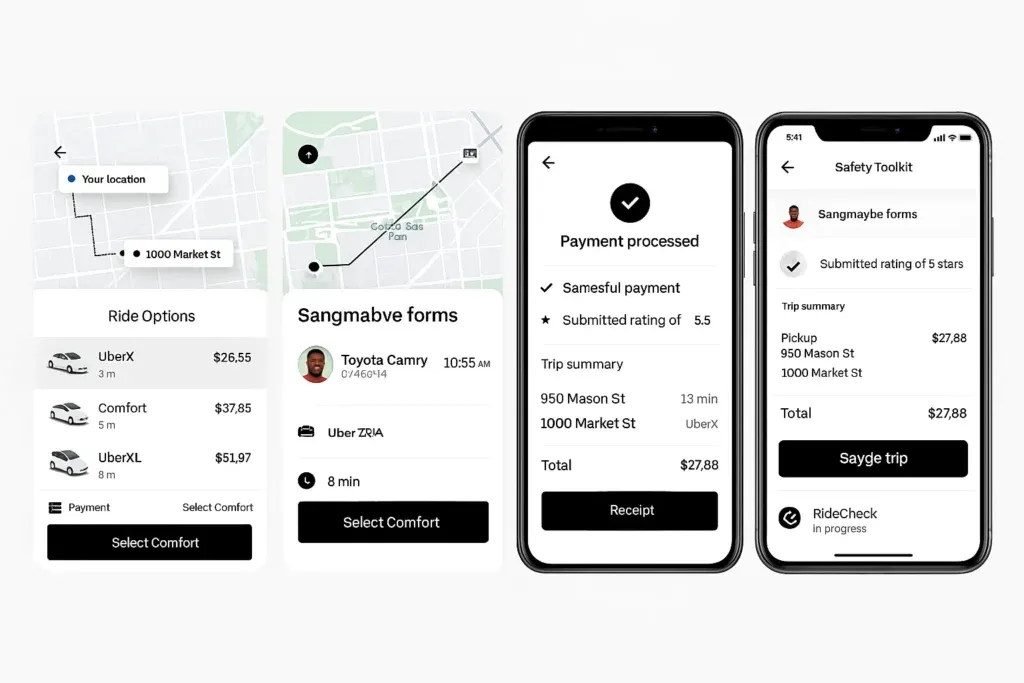

15. Uber: One city, one vehicle type, one platform

MVP type: Single feature

Year: 2009

Initial investment: Small seed round

The assumption being tested: Would people pay a premium to request a black car from their phone and have it arrive reliably?

Travis Kalanick and Garrett Camp launched UberCab in San Francisco only, for black car limousine service only, on iPhone only. The constraints were deliberate. They were not testing whether ride-hailing would work everywhere. They were testing whether the specific experience of requesting a premium vehicle from a phone, without calling a dispatcher, without negotiating price, and without waiting in uncertainty, was something people would pay significantly more for.

It was. The San Francisco market confirmed the hypothesis quickly enough that the expansion model became obvious. Each new city was a new validation of the same core experience, adapted to local conditions.

What to apply: When your product could theoretically work in many markets, start in one market with the fewest variables and the highest concentration of your likely early adopters. Broad simultaneous launches create noisy data. A focused launch in one context gives you clean signal. If you are building across borders with a distributed team, our guide to top IT outsourcing countries covers how to structure that for MVP speed.

16. Slack: A gaming tool that solved a problem nobody had named yet

MVP type: Internal tool

Year: 2013

Initial investment: Existing development budget

The assumption being tested: Could a persistent group messaging tool replace email for internal team communication?

Slack was not built as a product. Stewart Butterfield and his team were building a multiplayer game called Glitch. To coordinate development across a distributed team, they built an internal messaging system. Glitch failed as a game. The messaging tool the team had been using to build it was the most useful piece of software any of them had worked with.

They rebuilt it as a standalone product and launched it in private beta in 2013. Interest was immediate. Teams adopted it and rarely left. The retention signal was unlike anything Butterfield had seen. Within 24 hours of the public launch, 8,000 companies had signed up. Salesforce acquired Slack in 2021 for $27.7 billion.

What to apply: Internal tools built to solve real problems that a team actually has are often more valuable than products designed for an imagined customer. If you are building software for a specific workflow problem your own team has, the people closest to the problem are also your best early testers and your most honest critics.

17. Kickstarter: A crowdfunding platform that started with one project

MVP type: Crowdfunding platform

Year: 2009

Initial investment: ~$50,000

The assumption being tested: Would people financially back creative projects from strangers before those projects were completed?

Perry Chen, Yancey Strickler, and Charles Adler launched Kickstarter with a single project: a concert in New Orleans. The platform had only the core transaction: a campaign page, a funding goal, and a pledge button. No social features, no recommendation algorithm, no creator tools beyond the basics.

The first project funded. That confirmed both sides of the marketplace wanted to participate. Over the following months, they opened the platform to more creators in limited categories before expanding fully. The Kickstarter platform now hosts over seven billion dollars in total pledges across more than 250,000 successfully funded projects.

What to apply: Crowdfunding is itself an MVP validation tool. If your product is a physical or creative project, a crowdfunding campaign serves dual purposes: market validation and early funding. Real money from real people before you build is the clearest possible demand signal.

18. Netflix: DVD delivery as the MVP for a business that did not yet exist

MVP type: Single feature

Year: 1997

Initial investment: ~$2.5M

The assumption being tested: Would people rent DVDs through the mail rather than driving to a video store?

Reed Hastings and Marc Randolph launched Netflix as a DVD-by-mail service. There was no streaming, no recommendation engine, no original content, no subscription model initially. Just a website where you could order a DVD, have it mailed to you, and mail it back when you were done.

The first version charged per rental. The subscription model came after the core behavior was validated. Streaming came a decade later, when bandwidth and licensing made it viable. The company that most people associate with streaming started by proving that people would change how they consumed home video if the experience was convenient enough.

What to apply: Your current product may not be your long-term product. Netflix used DVD mail rental to build the customer relationships, recommendation data, and operational knowledge that eventually powered streaming. The MVP was a viable business in its own right while also being preparation for something larger.

What these 18 examples have in common

Reading across these MVP software development examples, a few patterns appear consistently enough to be worth naming.

Each team identified one assumption that would invalidate everything if it was wrong. Amazon’s assumption was that people would buy online without touching products. Zappos’s assumption was that people would buy shoes without trying them on. Buffer’s assumption was that people would pay for scheduling. They tested that assumption first, before anything else. Not a bundle of assumptions. One.

None of them built more than what was required to get a credible answer. Groupon used WordPress. ProductHunt used email. Twitter was a hackathon project. Dropbox was a screencast. The minimum was genuinely minimum, not a polished beta that took six months.

The MVP shaped the product, but it did not determine it. Every company on this list looks radically different today than it did at launch. The MVP was not the product. It was the evidence that made building the product worth doing. For more examples of how companies across industries made the transition from MVP to full custom software, see our roundup of real-world custom software examples.

Failure was built into the design. Each of these teams could have stopped if the data had come back wrong. Some had already failed before the MVP that worked. Odeo failed before Twitter. Burbn failed before Instagram. Glitch failed before Slack. The willingness to treat a negative result as useful information, rather than something to rationalize away, is what distinguishes teams that use MVPs well from teams that go through the motions.
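One way to make "failure built into the design" concrete is to write down the assumption, the metric, and the pass threshold before the test runs. The Python sketch below is a minimal illustration of that idea. The class name, the threshold of 10, and the second observed value are hypothetical; only the 20 purchases of Groupon's first deal come from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssumptionTest:
    """One riskiest assumption, with a pass threshold agreed before the test runs."""

    assumption: str
    metric: str
    threshold: float  # minimum observed value that counts as validation

    def decide(self, observed: float) -> str:
        """Return 'build' if the result meets the threshold, else 'stop or pivot'."""
        return "build" if observed >= self.threshold else "stop or pivot"


# The threshold is a placeholder chosen for illustration.
test = AssumptionTest(
    assumption="People will pre-purchase discounted local deals",
    metric="purchases of the first deal",
    threshold=10,
)
print(test.decide(observed=20))  # build
print(test.decide(observed=3))   # stop or pivot
```

Fixing the threshold up front is what makes a negative result hard to rationalize away afterward.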

Summary table: MVP types by use case

If you are trying to decide which MVP approach fits your situation, use this as a starting point.

| If you need to validate… | Consider this MVP type | Example |
|---|---|---|
| Whether anyone will pay at all | Landing page with pricing | Buffer |
| Whether a manual service works before automating it | Concierge | Amazon, Airbnb |
| Whether users will complete a specific behavior | Single feature | Instagram, Foursquare, Facebook |
| Whether a technical concept is feasible | Video or prototype | Dropbox |
| Whether a two-sided market has demand on both sides | Email or manual matching | AngelList |
| Whether you can validate without writing code | Piecemeal | Groupon, ProductHunt |
| Whether your own team has a problem worth solving | Internal tool | Slack, Twitter |
| Whether people will financially commit before launch | Crowdfunding | Kickstarter |
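If you want this mapping in a script or an internal planning tool, it fits in a small lookup. The Python sketch below transcribes the summary table. The dictionary name, the function name, and the question phrasings used as keys are hypothetical.

```python
# Validation question -> (suggested MVP type, example companies),
# transcribed from the summary table in this article.
MVP_BY_QUESTION: dict[str, tuple[str, list[str]]] = {
    "will anyone pay at all": ("Landing page with pricing", ["Buffer"]),
    "does a manual service work before automating it": ("Concierge", ["Amazon", "Airbnb"]),
    "will users complete a specific behavior": ("Single feature", ["Instagram", "Foursquare", "Facebook"]),
    "is a technical concept feasible": ("Video or prototype", ["Dropbox"]),
    "does a two-sided market have demand on both sides": ("Email or manual matching", ["AngelList"]),
    "can you validate without writing code": ("Piecemeal", ["Groupon", "ProductHunt"]),
    "does your own team have a problem worth solving": ("Internal tool", ["Slack", "Twitter"]),
    "will people financially commit before launch": ("Crowdfunding", ["Kickstarter"]),
}


def suggest_mvp(question: str) -> tuple[str, list[str]]:
    """Look up the suggested MVP type for one of the known validation questions."""
    # Normalize case, surrounding whitespace, and a trailing question mark.
    key = question.strip().lower().rstrip("?")
    try:
        return MVP_BY_QUESTION[key]
    except KeyError:
        raise KeyError(f"unknown validation question: {question!r}") from None


mvp_type, examples = suggest_mvp("Can you validate without writing code?")
print(mvp_type, examples)  # Piecemeal ['Groupon', 'ProductHunt']
```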

